Artificial Intelligence has moved quickly from rule-based systems to models that can understand language, images, and intent. At the centre of this shift is a simple but powerful idea: representing information as vectors. A “Vector Store” is the system that makes those representations usable at scale.

This post explains what a vector store is, how it works, and why it has become a critical component in modern AI architectures.

The Core Idea

A vector store is a database designed to store, index, and retrieve vectors.

A vector is an ordered list of numbers. In AI, vectors produced by embedding models encode the meaning of a piece of data. These models convert unstructured data such as text, images, or audio into numerical form so machines can compare items mathematically.

For example, the sentences:

- “Customer cannot log in”

- “User unable to access account”

may look different as text, but when converted into vectors, they sit close together in a multi-dimensional space because they mean similar things.
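To make "close together" concrete, here is a minimal sketch that scores closeness with cosine similarity. The three vectors are hand-picked toy values, not the output of a real embedding model, and the third sentence ("invoice overdue") is an assumed unrelated example added for contrast.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-picked toy vectors (NOT real model output), for illustration only.
cannot_log_in = [0.9, 0.8, 0.1]       # "Customer cannot log in"
unable_to_access = [0.85, 0.75, 0.2]  # "User unable to access account"
invoice_overdue = [0.1, 0.2, 0.95]    # assumed unrelated sentence

similar = cosine_similarity(cannot_log_in, unable_to_access)
unrelated = cosine_similarity(cannot_log_in, invoice_overdue)
print(f"login vs access:  {similar:.3f}")
print(f"login vs invoice: {unrelated:.3f}")
```

The two login-related vectors score much higher against each other than either does against the unrelated one, which is the property a vector store relies on.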

A vector store allows you to:

- Store these embeddings

- Search them efficiently

- Retrieve the most relevant results based on similarity

This is fundamentally different from traditional keyword search.

Why Traditional Databases Fall Short

Relational databases and standard search engines are excellent for structured data and exact matching. However, they struggle with meaning.

If you search a traditional database for “login issue”, it may miss records labelled “authentication failure” or “access denied”. It relies on exact words or predefined rules.

Vector stores solve this by focusing on semantic similarity rather than literal matches. They allow AI systems to “understand” relationships between data points.

How a Vector Store Works

At a high level, a vector store operates in three stages:

1. Embedding

Raw data is converted into vectors using an embedding model.

Examples:

- Text is turned into sentence embeddings

- Images into feature vectors

- Logs into vectors that capture behavioural patterns

Each piece of data becomes a point in a high-dimensional space.
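The shape of this step can be sketched with a stand-in function. The `toy_embed` function below is hypothetical and uses the hashing trick, so it only reflects which words appear and does not capture meaning the way a trained embedding model does. It is here only to show the contract: any input text comes out as a fixed-length numeric vector.

```python
import hashlib
import math

DIMENSIONS = 16  # assumption for the demo; real models use hundreds or thousands


def toy_embed(text):
    """Map text to a fixed-length unit vector using the hashing trick.

    Stand-in for a real embedding model: it counts words per bucket,
    so it reflects shared vocabulary, not meaning.
    """
    vector = [0.0] * DIMENSIONS
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        bucket = digest[0] % DIMENSIONS  # deterministic bucket for this word
        vector[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vector))
    # Normalise to unit length so vectors of different texts are comparable.
    return [v / norm for v in vector] if norm else vector


point = toy_embed("Customer cannot log in")
print(len(point))
```

In a real system, `toy_embed` would be replaced by a call to whichever embedding model the team has chosen, and the same model must be used for both stored data and queries.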

2. Storage and Indexing

These vectors are stored alongside metadata.

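Because vectors can have hundreds or thousands of dimensions, comparing a query against every stored vector becomes too slow at scale. Vector stores therefore rely on Approximate Nearest Neighbour (ANN) indexing, which trades a little accuracy for much faster search. Common ANN techniques include:

- Hierarchical Navigable Small World (HNSW) graphs

- Product Quantization (PQ)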

These methods allow fast similarity searches across large datasets.
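As a rough illustration of how an approximate index narrows the search, here is a minimal sketch using random-hyperplane locality-sensitive hashing. This is a different and much simpler technique than HNSW or Product Quantization, chosen only because it fits in a few lines. The class name `RandomHyperplaneIndex`, its methods, and the incident IDs are all hypothetical.

```python
import random
from collections import defaultdict


class RandomHyperplaneIndex:
    """Toy ANN index: vectors on the same side of every random plane share a bucket."""

    def __init__(self, dimensions, num_planes=4, seed=0):
        rng = random.Random(seed)  # fixed seed keeps the example repeatable
        self.planes = [
            [rng.gauss(0.0, 1.0) for _ in range(dimensions)]
            for _ in range(num_planes)
        ]
        self.buckets = defaultdict(list)

    def _signature(self, vector):
        # One bit per plane: which side of the plane the vector falls on.
        return tuple(
            sum(p * v for p, v in zip(plane, vector)) >= 0.0
            for plane in self.planes
        )

    def add(self, item_id, vector, metadata=None):
        # Vectors are stored alongside their metadata.
        entry = (item_id, vector, metadata or {})
        self.buckets[self._signature(vector)].append(entry)

    def candidates(self, query_vector):
        # Only one bucket is scanned, which is why the search is approximate:
        # a true neighbour that fell into another bucket would be missed.
        return self.buckets.get(self._signature(query_vector), [])


index = RandomHyperplaneIndex(dimensions=3)
index.add("INC-1", [0.9, 0.8, 0.1], {"status": "closed"})
index.add("INC-2", [-0.7, 0.1, -0.9], {"status": "open"})

# A query pointing in the same direction as INC-1 lands in the same bucket.
matches = index.candidates([1.8, 1.6, 0.2])
print([item_id for item_id, _, _ in matches])
```

The design trade-off is visible in `candidates`: the fewer vectors a bucket holds, the faster the lookup, but the greater the chance of missing a genuine neighbour. Production indexes manage the same trade-off with more sophisticated structures.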

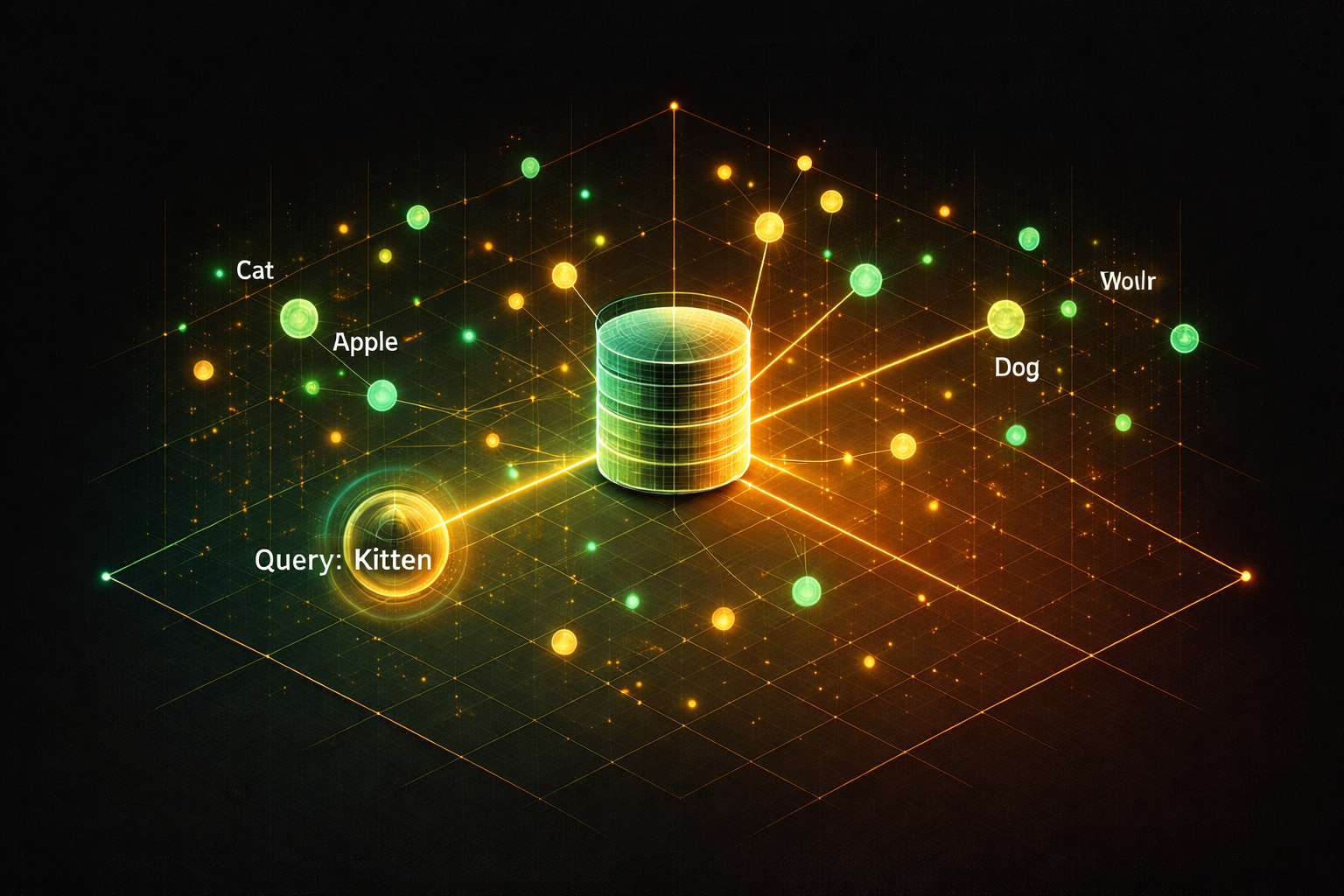

3. Query and Retrieval

When a user submits a query, it is also converted into a vector.

The vector store then finds the stored vectors closest to the query vector, using a distance measure such as cosine similarity or Euclidean distance. “Closest” means most similar in meaning, not identical in wording.

The result is a ranked list of relevant items.
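The ranking step can be sketched as follows, assuming Euclidean distance as the measure of closeness. The function name `nearest`, the document IDs, and the vectors are made up for illustration. This version compares the query with every stored vector, which is the exact search that a real index approximates.

```python
import heapq
import math


def nearest(query_vector, stored, k=2):
    """Return the k stored items closest to the query as (distance, item_id) pairs."""
    scored = (
        (math.dist(query_vector, vector), item_id)
        for item_id, vector in stored
    )
    return heapq.nsmallest(k, scored)  # smallest distance first


# Made-up vectors standing in for embedded documents.
stored = [
    ("doc-a", [0.9, 0.8, 0.1]),
    ("doc-b", [0.1, 0.2, 0.95]),
    ("doc-c", [0.8, 0.9, 0.2]),
]
results = nearest([0.85, 0.8, 0.15], stored)
for distance, item_id in results:
    print(f"{item_id}: {distance:.3f}")
```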

A Simple Example

Imagine a support system storing past incidents.

Each incident description is embedded and stored as a vector.

A user asks:

“Why can’t I access my account?”

The system converts this question into a vector and searches for similar vectors. It may retrieve incidents tagged:

- “Login failure due to expired password”

- “User authentication blocked after multiple attempts”

Even though the wording differs, the meaning aligns.

Key Use Cases in AI

Vector stores are now a foundational component in many AI applications.

1. Retrieval-Augmented Generation (RAG)

Large Language Models such as OpenAI's GPT models or Anthropic's Claude are powerful, but they only know what was in their training data, which excludes private and recent information.

RAG solves this by combining LLMs with a vector store.

Process:

- Store enterprise knowledge as embeddings

- Retrieve relevant content at query time

- Inject it into the model prompt

This allows AI to answer questions using current, organisation-specific data.
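The "inject it into the model prompt" step might look something like the sketch below. The function name `build_rag_prompt`, the prompt wording, and the sample chunks are assumptions for illustration. The retrieval itself and the call to the LLM are left out because they depend on the specific vector store and model provider.

```python
def build_rag_prompt(question, retrieved_chunks, max_chunks=3):
    """Assemble a prompt that grounds the model in retrieved content."""
    context = "\n\n".join(
        f"[{number}] {chunk}"
        for number, chunk in enumerate(retrieved_chunks[:max_chunks], start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Hypothetical chunks, as if returned by a vector store search.
chunks = [
    "Login failure due to expired password",
    "User authentication blocked after multiple attempts",
]
prompt = build_rag_prompt("Why can't I access my account?", chunks)
print(prompt)
```

Capping the number of chunks keeps the prompt within the model's context window. The right limit depends on chunk size and the model in use.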

2. Semantic Search

Instead of keyword search, users can ask natural language questions.

Example:

“Show me recent payment failures in production”

The system retrieves relevant logs, incidents, or tickets even if exact terms do not match.
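A query like this usually mixes meaning ("payment failures") with a hard constraint ("in production"). One common way to handle that is to filter on metadata first and rank by similarity second. The sketch below assumes a hypothetical `Record` type and toy two-dimensional unit vectors, so the dot product serves as the similarity score.

```python
from dataclasses import dataclass


@dataclass
class Record:
    record_id: str
    vector: list       # assumed to be normalised to unit length
    environment: str   # metadata stored alongside the vector


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def filtered_search(query_vector, records, environment, k=2):
    """Keep records matching the metadata filter, then rank them by similarity."""
    candidates = [r for r in records if r.environment == environment]
    ranked = sorted(
        candidates,
        key=lambda r: dot(query_vector, r.vector),
        reverse=True,  # highest similarity first
    )
    return [r.record_id for r in ranked[:k]]


# Toy data: LOG-4 is the closest vector overall but is not in production.
records = [
    Record("LOG-1", [0.8, 0.6], "production"),
    Record("LOG-2", [0.6, 0.8], "staging"),
    Record("LOG-3", [0.0, 1.0], "production"),
    Record("LOG-4", [1.0, 0.0], "staging"),
]
hits = filtered_search([1.0, 0.0], records, environment="production")
print(hits)
```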

3. Recommendation Systems

Vector similarity can identify related items.

Examples:

- Products similar to what a user viewed

- Documents related to a current task

- Test environments with similar configurations

4. Anomaly Detection

By comparing new vectors against those seen previously, systems can flag items that sit unusually far from established patterns.

This is useful for:

- Fraud detection

- System monitoring

- Data drift analysis
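One simple way to express this idea, offered as a sketch and not as a production method, is to measure how far a new vector sits from the centroid of a baseline set. The function names, the toy vectors, and the threshold of 0.5 are assumptions. A real system would tune the threshold against historical data.

```python
import math


def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def is_anomalous(vector, baseline, threshold):
    """Flag a vector whose distance from the baseline centroid exceeds the threshold."""
    return math.dist(vector, centroid(baseline)) > threshold


# Toy baseline representing "normal" behaviour seen so far.
baseline = [[0.9, 0.1], [1.0, 0.2], [1.1, 0.0]]

typical = [0.95, 0.15]
unusual = [0.1, 1.2]
print(is_anomalous(typical, baseline, threshold=0.5))
print(is_anomalous(unusual, baseline, threshold=0.5))
```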

Where Vector Stores Fit in an AI Architecture

A typical modern AI stack looks like this:

- Data sources: databases, logs, documents

- Embedding model: converts data into vectors

- Vector store: stores and retrieves embeddings

- Application layer: APIs, workflows, orchestration

- LLM: generates responses or actions

The vector store sits between raw data and AI reasoning.

It acts as the memory layer for AI systems.

Popular Vector Store Technologies

Several technologies have emerged to support this pattern, from managed services and dedicated databases to similarity-search libraries:

- Pinecone

- Weaviate

- Milvus

- FAISS

Traditional databases are also adding vector support, for example PostgreSQL through the pgvector extension.

Each offers different trade-offs in scalability, latency, and operational complexity.

Benefits of Using a Vector Store

Improved Relevance

Results are based on meaning, not keywords.

Flexibility

Works across text, images, and other unstructured data.

Scalability

Designed to handle millions or billions of vectors.

AI Enablement

Unlocks advanced capabilities such as RAG and intelligent search.

Considerations and Challenges

While powerful, vector stores introduce new design considerations.

Embedding Quality

The effectiveness of a vector store depends on the embedding model. Poor embeddings lead to poor results.

Data Freshness

Vectors must be regenerated when the underlying data changes, otherwise searches return stale results.
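One possible way to detect which items need re-embedding is to keep a hash of the text that was embedded and compare it with the current source. The sketch below assumes hypothetical document IDs and a simple dictionary of stored hashes. How the hashes are persisted would depend on the vector store's metadata features.

```python
import hashlib


def content_hash(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def find_stale(source_docs, embedded_hashes):
    """Return ids whose current text differs from the text that was embedded."""
    return sorted(
        doc_id
        for doc_id, text in source_docs.items()
        if embedded_hashes.get(doc_id) != content_hash(text)
    )


# Hashes recorded when each document was last embedded (hypothetical data).
embedded_hashes = {
    "kb-1": content_hash("Reset your password from the login page."),
    "kb-2": content_hash("Payments are retried three times."),
}
# Current state of the source: kb-2 was edited and kb-3 is new.
source_docs = {
    "kb-1": "Reset your password from the login page.",
    "kb-2": "Payments are retried five times.",
    "kb-3": "Accounts lock after repeated failed logins.",
}
stale = find_stale(source_docs, embedded_hashes)
print(stale)
```

Only the changed and new documents are returned, so the unchanged ones do not incur the cost of another embedding call.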

Cost and Performance

Indexing and searching high-dimensional vectors can be memory- and compute-intensive, especially as the dataset grows.

Governance

Sensitive data embedded into vectors must still comply with security and privacy policies.

This is particularly important when dealing with PII or regulated datasets.

A Practical Perspective

From an enterprise standpoint, a vector store should not be treated as a standalone tool. It is part of a broader architecture.

The real value comes when it is integrated into workflows.

For example:

- Linking vector search to release management insights

- Enabling environment-level knowledge retrieval

- Supporting intelligent automation decisions

This aligns with the concept of a central control layer where data, environments, and processes are connected.

The Bottom Line

A vector store is not just another database. It is a new way of organising and retrieving information based on meaning.

As AI systems become more context-aware, the need for fast, accurate semantic retrieval will only increase.

Vector stores provide the foundation for this capability.

They turn raw data into something AI can reason over, making them essential for any organisation looking to move beyond basic automation and into intelligent systems.

In simple terms:

If large language models are the brain, the vector store is the memory that makes them useful in the real world.